LAS VEGAS — Intel showed off a lot of cool technology last week at the 2014 International CES. CEO Brian Krzanich gave a keynote speech, while Mooly Eden, the senior vice president of perceptual computing and president of Intel Israel, held a press conference to show how far Intel has come with perceptual computing, which uses gestures and image recognition to control a computer.

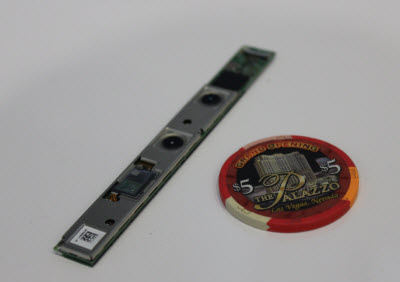

I sat down with Eden a day after his presentation and quizzed him about the RealSense 3D camera, which can recognize gestures and finger movements. Intel plans to build an inexpensive version of the camera into laptops and other computing devices starting in the second half of 2014. Intel has a lot at stake in the project, as it hopes this will inject new life into the PC market.

Eden said that Intel is making big investments in both technology and content to make perceptual computing real. He also told us that Microsoft isn’t the enemy despite Intel’s support for dual-boot Windows and Android computers as well as its support for Valve’s Linux-based Steam Machines.

Here’s an edited transcript of our interview with Eden at CES.

VentureBeat: You debuted the RealSense 3D depth camera at your press conference. Was that difficult for Intel to create?

Eden: This is the first time we went public with the camera. No, a piece of cake. [Laughs] If you compare this to this, you can imagine — any of these things are proprietary, like the laser. It was very complicated to develop. We were trying to break some barriers. We were trying to defy the laws of physics, the laws of optics. We still have a lot of things to prove and move forward.

There’s good news and bad news. The bad news is that it’s very complicated. The good news is that it’s very complicated, so it won’t be easy [for competitors] to close the gap.

VentureBeat: It sounds like putting 2D and 3D together was another challenge.

Eden: No, that’s not a problem. If you’re trying to look at 2D and 3D, this is an infrared camera, and this is standard RGB. RGB is a piece of cake. We know how to take a picture. The problem is when you have that picture and what you call the point cloud. Then you need to take the 2D camera, which is totally different, and put it over the 3D and make sure it matches exactly. That’s what we call texture mapping. It’s not simple. If something heats up or changes, or if this isn’t quite rigid enough to keep it in calibration — We have a lot of challenges.
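For readers curious about what that alignment involves, here is a minimal sketch of the general idea, not Intel's implementation. It assumes a simple pinhole camera model; the names `Intrinsics` and `depth_point_to_rgb_pixel`, and all of the numbers in the usage note, are hypothetical and chosen only for illustration. Each 3D point from the depth sensor is moved into the RGB camera's coordinate frame using a calibrated rotation and translation, then projected onto the RGB image to find the pixel whose color belongs to that point.

```python
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Pinhole parameters of the RGB camera (hypothetical values, in pixels)."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point x
    cy: float  # principal point y


def depth_point_to_rgb_pixel(point, rotation, translation, rgb):
    """Map one 3D point (meters, depth-camera frame) to an RGB pixel (u, v).

    rotation is a 3x3 matrix given as nested lists and translation is a
    3-vector; together they are the calibration between the two cameras.
    Returns None when the point lies behind the RGB camera.
    """
    # Rigid transform from the depth camera's frame into the RGB camera's frame.
    xr, yr, zr = (
        sum(r * p for r, p in zip(row, point)) + t
        for row, t in zip(rotation, translation)
    )
    if zr <= 0:
        return None
    # Perspective projection onto the RGB image plane.
    u = rgb.fx * xr / zr + rgb.cx
    v = rgb.fy * yr / zr + rgb.cy
    return (u, v)
```

Under these assumed numbers (a 600-pixel focal length, cameras 25 mm apart, a fingertip half a meter away), calling `depth_point_to_rgb_pixel((0.0, 0.0, 0.5), identity, (0.025, 0.0, 0.0), Intrinsics(600.0, 600.0, 320.0, 240.0))` puts the point at roughly pixel column 350. If heat or flex shifts the cameras by a single millimeter, the same point lands more than a pixel away, which is why the rigidity and calibration issues Eden describes matter.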

VentureBeat: Is the vision for perceptual computing similar to Microsoft’s Kinect?

Eden: No, totally different. Kinect was a great solution. I salute them for being the first to develop it. But that was more long-range. We’re doing a much closer-range camera, capturing fine detail, doing finger tracking. We have the full depth, compared to other solutions, in order to track your hands and be able to do very fine segmentation. We can extract your face out of the background.

I’d like to see more complementary efforts from Microsoft. We’re going to try to co-develop and cooperate and see what we can do with Skype and things like that. We have different plans for usage.

VentureBeat: It seems like you have a big job ahead as far as getting applications on it.

Eden: Definitely. That’s the biggest challenge. We announced a lot of collaboration [at CES]. We have the right budget to invest and make it happen. We’ve already released the SDK. Once we start ramping up to a bigger installed base, we expect the developer community to use the SDK and the whole thing will feed itself. But it’ll need a little jump-start.

VentureBeat: When I look at some of the demos, it seems like they’re somewhat rough. You can see the outlines of the person in the green-screen effect where you put them into a new background.

Eden: It’s still not production, no. Some of the examples we’re showing use very complicated algorithms. Some of them are much easier than others. Some of them we’re still working on. Not all of them are done. But we still have time. We need to refine things. It’ll improve as we go forward.

VentureBeat: When you’re able to launch this, do you think you’ll have a set of applications ready?

Eden: Definitely. We can’t launch without applications. How do you sell hardware if you can’t show software, if you can’t show the user experience? What are you buying? We need a set of compelling applications so people will want to use it, and then we can say, “By the way, we’ll have more and more as we go forward.”

VentureBeat: What are some of the other things coming along, like eye-tracking?

Eden: We didn’t comment on eye tracking. It’s a very interesting technology. We’re looking into it. But officially, we haven’t said anything. It could be part of the overall perceptual computing effort, because if I know where you’re looking, that’s important information. I can deliver data based on your interest. But for the time being, we haven’t announced anything regarding eye tracking.

VentureBeat: Among the things that Brian Krzanich showed in his keynote speech, were there some perceptual computing projects, such as the gaming thing?

Eden: The scavenger, the guy that’s going all over like this? That was done by our team, yeah. We were using the system in order to demonstrate the capabilities of what we think can be done. It’s a different usage, because it’s not in your face. You’re looking at the world.

VentureBeat: Was the Leviathan whale demo part of your project as well?

Eden: We’re part of it. What you saw there is augmented reality. It’s done by Interlab, at one of the universities, and our team in Israel. The idea was, I take the tablet and I look at you. Through the tablet, I see the real world. Now I see augmented things running that you don’t see as part of the picture on the screen. What we couldn’t demonstrate is the integration of 2D. When you go around, you see the world from a different place.

VentureBeat: Everyone was looking for the whale in the real world.

Eden: Try to imagine your kids playing a game, where suddenly you bring the whole universe to life. Think about education. I’m trying to educate people about the whale, and suddenly I just show it to them. Or a dinosaur. You can go exploring by yourself. If you want to know what it looks like inside, you could even get closer and just move inside it.

The idea was just to whet your appetite and show some of the opportunities. Eventually, we’re going to release the SDK and harness all this great innovation around us.